Robo-Cops: AI Is Coming For Your Local Police

As Northwest police departments adopt artificial intelligence, some see a dystopian nightmare. Others see an inevitable future.

The detective woke up strapped to a steel chair. A woman with a flat expression and blonde hair combed back behind her ears looked on.

“Detective Raven, I’m Judge Maddox,” she said. “You’re before this court today, charged with the murder of your wife.”

The detective, played by actor Chris Pratt, spends the next 90 minutes of the 2026 film Mercy trying[1] to convince Maddox, an autonomous artificial intelligence judge, of his innocence. The film is set in a bizarre future where the American public has accepted that people charged with crimes are guilty until proven innocent. If someone cannot prove their innocence, they are summarily executed.

The artificial intelligence judge allows Pratt to use a wide array of state surveillance tools to prove his case. He tracks down alternate suspects in his wife’s death using license plate readers, cellphone tracking, police body cameras, even an Instagram account.

Mercy shows a dystopian future. But in real life, Jumana Musa, director of the National Association of Criminal Defense Lawyers’ Fourth Amendment Center, said she didn’t see the future when she watched the movie.

“The thing that was most disturbing about that film — because I sat there taking notes while I was watching it — I’d say 90% of the things that are in there are already in use,” she said.

Facial recognition. Doorbell cameras. Intercepted cellular signals. These are all existing police surveillance tools Musa sees regularly in her work as a lawyer.

“It was meant to be this futuristic whatever, but it was actually like, without the AI judge and some of the things they could access, pretty much like a real-time crime center,” Musa said.

Throughout 2025, cities in the Northwest like Olympia, Lynnwood, Eugene and Woodburn showed some skepticism over AI tools, pulling the plug on automated license plate reading cameras operated by Flock Safety due to privacy concerns.

A recent survey by The Western Edge of Northwest cities, police departments and prosecutors found wildly differing views on AI’s future in policing. In some municipalities, prosecutors speak of AI in terms that evoke scenes from Mercy, pointing to ways the technology “hallucinates” fictions that carry human consequences. And yet, in other cities, officers have threatened revolt if AI isn’t a part of their toolbelt.

Either way, AI technologies are finding their way into the hands of local law enforcement. This month, at least two police agencies in Oregon expanded their use of AI to write police reports.

“I think the danger is using these tools without the side rails,” said Kevin Barton, the district attorney in Washington County, Oregon. “If a community is going to consider using them, in my opinion, the only responsible way to do that is to make sure that you always prioritize the human being as the final say.”

Meanwhile, one prominent western company, Axon — based in Arizona — is at the forefront of law enforcement’s “AI era,” and is offering police departments financial incentives to get on board.

“What was once just a recording device is now a completely connected communications support tool,” Axon CEO Rick Smith, dressed in a Jobsian black turtleneck and dark slacks, said on stage at the company’s trade show in 2025. “It’s an AI-powered companion that just sits right on your chest.”

Axon, the everything app

On March 22, 2017, police in Snohomish County, Washington, entered the home of Alex Dold, aiming to break up a domestic dispute. Dold had been off his medication for schizophrenia for months, his sister told KOMO News.

The family called police to get Dold into treatment for his mental health, but at the scene, deputies wrestled with him and used a Taser to shock him into submission. Dold, just 29 years old, died from cardiac arrest. His case was one of many across the country in which the “less lethal” weapon became plainly lethal.[2]

By the next month, Taser International, the device’s maker, had rebranded to the name of its body camera line, Axon.[3]

Axon started offering a deal to police departments, which were clamoring for body-worn cameras after high-profile police killings of Black men had drawn national protests: take a free camera for each of your officers for a year.

The catch came when the free trial ended.

“You’ve bought in. You now can’t get your video out if you don’t keep with their system, because now it is their proprietary system that holds the video,” Musa said. “It snowballs from there.”

Over the past two years, Axon has rapidly pushed AI into those same body cameras it put onto the chests of hundreds of thousands of police officers. Motorola, another major player in the police body camera business, has also implemented AI in the past two years.

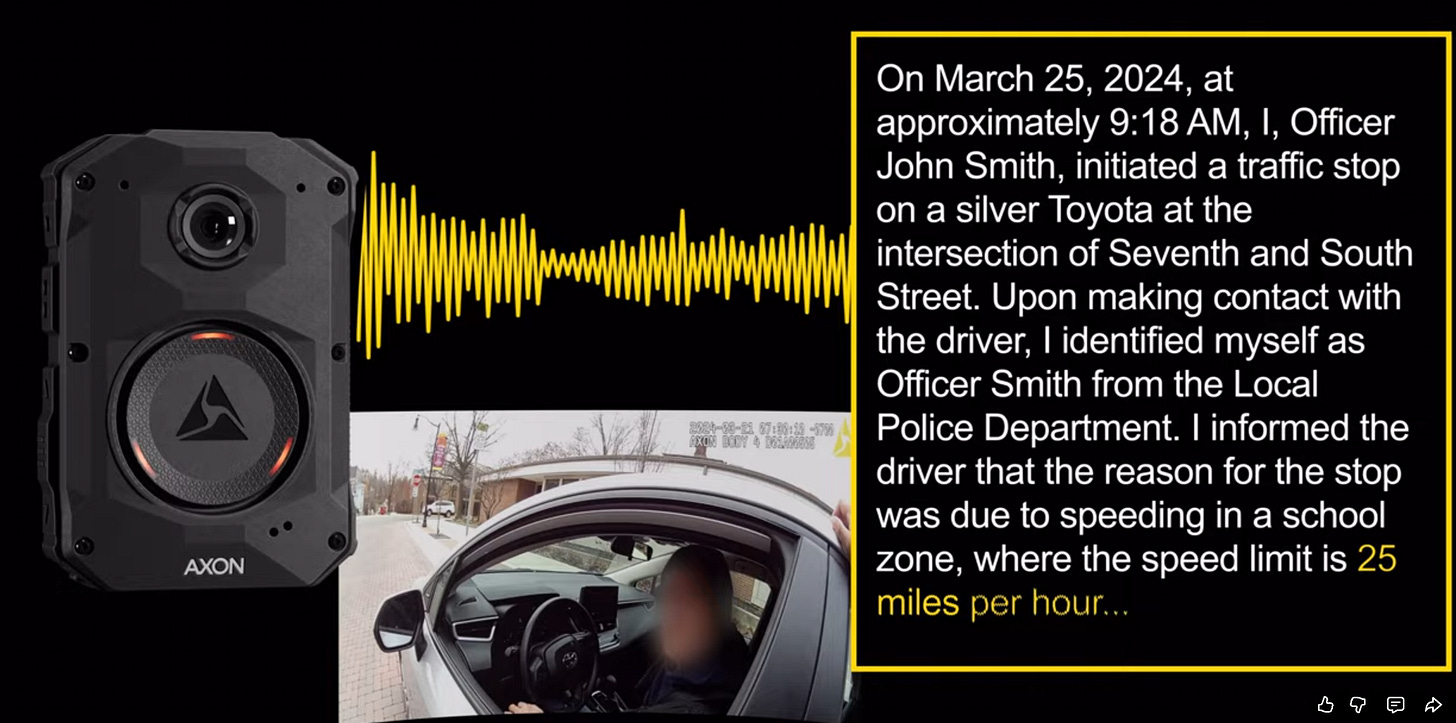

The Western Edge found Axon has approached many agencies in the past year about a piece of AI-powered software called Draft One, which writes the first draft of police reports based on the audio captured with its body cameras. Axon did not respond to a series of questions for this story.

Portland Police Bureau spokesman Sgt. Kevin Allen said his agency has discussed using Draft One, but felt the technology was “too early” to experiment with. Law enforcement in both large cities like Tacoma, Washington, and smaller cities like Pendleton, Oregon, said they flat out have no interest in AI.[4]

But police departments in some cities have embraced the technology.

The Medford Police Department, in Southern Oregon, was among the first agencies in the Northwest to automate its report writing with Draft One.

“There was hesitancy,” Sgt. Geoff Kirkpatrick, Medford’s public information officer, said in an interview, “but it was very quickly that people were like, ‘If you take this away from us, we will revolt.’ It’s incredible.”

Medford first allowed a select number of officers to use Draft One in August 2024.[5] Within three months of starting that testing, the department let all of its officers draft any police report using AI.

“What I was seeing consistently was (the reports) were more detailed than sometimes what you would see in police reports,” Kirkpatrick said.

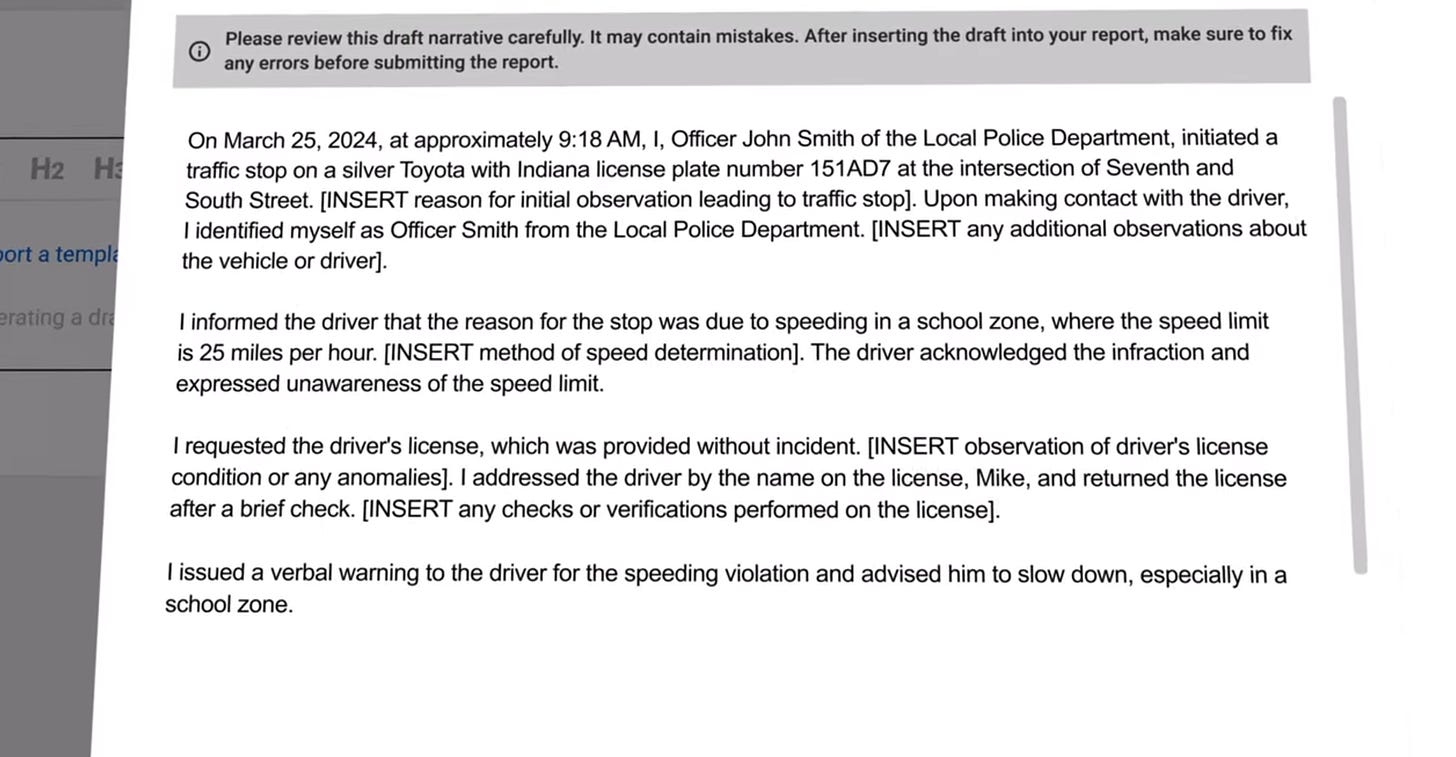

Medford enabled some safeguards offered by the Draft One system, such as inserting gibberish into a report so that officers must read the draft and remove it. Medford police also set a minimum percentage of information that officers must change from the initial AI draft before a report can move forward. The reports, according to Kirkpatrick, are also labeled as being created with Draft One.[6]

The AI has been a “game changer” for Medford police, as officers have reported saving significant time writing accounts of their public encounters, Kirkpatrick added.

Justin Rosas, a criminal defense lawyer in Medford, believes the local department is trading people’s civil liberties to save time. When Draft One uses audio from a body camera to create a report, it includes bracketed suggestions where an officer might need to fill in more information. Officers who spoke to The Western Edge universally described this as good, a requirement that forces them to closely read the AI-generated text.

In some cases, the program may even recommend adding particular information that could bolster the case. That’s where defense lawyers have concerns about the AI. Arrests and criminal charges are based on probable cause, or an officer’s own reasonable suspicion at the time they take a person into custody. Lawyers like Rosas don’t want AI to fill in the gaps in an officer’s own perception of a situation after an arrest.

“That is so problematic because it’s not in (the officer’s) mind at the time,” Rosas said. “That’s where we get into whether it’s an unreasonable search or seizure.”

Law enforcement who are using Draft One and spoke to The Western Edge downplayed probable cause concerns, noting that officers working without AI can simply watch their own body camera footage if they need to fill in gaps in their report. That’s not the same as AI doing it for you, Rosas said.

“You’re going to have all sorts of cases where the actual information that was in the law enforcement officer’s mind at the time would not be probable cause,” he said.

Rosas worries that Axon is embedding AI into police departments across the country before lawyers have a chance to understand its ramifications for civil liberties. That includes using AI to comb through Ring cameras[7] when crimes happen. In October, Axon announced it was partnering with the Amazon-owned company so police departments could more easily tap into the privately owned cameras. Giving police access to your Ring camera is voluntary, but once a camera owner signs up, the AI can take over, according to Rosas.

“You’ve got this combination of Flock cameras and Ring cameras registered into this national database that Axon’s running, and they’re running a ChatGPT-based AI platform through it to look for suspicious movements,” Rosas said. “You’re getting this sort of automated suspicion.”

He’s also worried about bias that’s deeply coded into systems like ChatGPT because of the material AI trains upon.[8] (If you’re concerned, Axon studied its own program in a “double-blind” study with unnamed experts and found Draft One is unbiased.)

Several defense attorneys and investigators who spoke to The Western Edge said adopting programs like Draft One too quickly entrenches Axon and its AI into the criminal justice system in ways that could be difficult to unravel if problems do arise.

“By the time anybody really knows anything about it, it’s like, ‘Oh, we’ve already been using this everywhere and it’s accurate. You didn’t even notice,’” Rosas said. “Then, the tool survives without actual criticism because it’s prevalent.”

A tiered approach

In September 2024, the King County Prosecuting Attorney’s Office in Seattle did not water down its criticisms of Draft One.

“It alone decides what parts of the audio are unintelligible. It has ‘hallucinations’ (errors) both large and small. It does not track its rate of errors, or how many errors an officer fixed in prior drafts,” Chief Deputy prosecutor Daniel Clark wrote in a memo to police departments in Washington state’s most populous county, telling them to never submit a report using AI.

“In one example we have seen, an otherwise excellent report included a reference to an officer who was not even at the scene.”

Kevin Barton, the prosecutor in Washington County, Oregon, said King County’s memo was on his mind when the local sheriff’s department asked if it could try out Draft One.

“My initial reaction was a strong ‘no way,’” he told The Western Edge. “I immediately thought about all the ways that it could go wrong.”

In the early fall of last year, Barton sent his own memo telling local law enforcement officers to stay away from AI in their work.

But Barton quickly began to reconsider his hard line after speaking to the Los Angeles County District Attorney’s office, where prosecutors learned about Draft One only after local police began using it without their knowledge. Barton imagined what would happen if he had an AI police report land on his desk without any guardrails in place.

“What we wanted to do was avoid that dynamic where a decision is made elsewhere and then we are living with the results,” he said.

In October, Barton signed off on a phased trial of Draft One at the Washington County Sheriff’s Office. The stipulations: only a few deputies could use the program, and only on low-level cases. But those restrictions proved too limiting, and data from that trial didn’t show much. In December, Barton broadened the test, allowing more deputies to use the software on more charges, including intoxicated driving, retail theft and warrant arrests.[9]

He again widened the scope earlier this month, on March 10, extending Draft One use to all misdemeanor crimes and a handful of felony charges.[10] If Draft One is used in creating a police report, the report in Washington County carries a clear label stating the AI was involved.

“So far, I’ve heard no negatives,” Barton said. “Nothing from the patrol officers or the defense bar or even prosecutors. I’ve only heard positives anecdotally.”

The Bend Police Department in Central Oregon has given a green light to a similar rollout of the program. Bend officers started using Draft One on a limited basis in June 2025 after Axon made an irresistible offer: try out two years of Draft One for free.[11] Bend, which saved more than $100,000 for its own budget with the deal, soon had AI working alongside a small group of officers.

“Speaking candidly,” police Capt. Nicholas Parker said, “that first pilot group didn’t really care for it.”

The tests started with cases where officers didn’t have a suspect, such as car break-ins, because it meant the crime was unlikely to go to a prosecutor. Experienced officers using Draft One for low-level cases found the AI program spat out lengthy reports that took them longer to edit than writing the reports themselves would have.

In November, the department switched to testing the program with newer officers, who liked it. Draft One helped organize their thoughts into a clear report narrative – an essential skill for officers called to testify in court.

“It helped,” Parker said, “but they’re not developing that skill at the same time.”

On March 26, Bend police entered the last phase of their trial and opened the program to any officer in the department who wanted to try it. Officers can’t use it on the most serious cases (arson, homicides, sex crimes), but it will be the widest use of Draft One the department has seen.

Parker isn’t sure if his agency will keep Draft One after the free trial ends, however. He’s told officers that anything generated by AI needs to be reviewed by a person. If Draft One makes serious mistakes or doesn’t save officers time, Bend may decide to drop the program.[12]

The future of AI

Axon is not the only company traveling to police departments across the country to proselytize officers on the wonders of AI.

Wandering the streets of Elizabeth, New Jersey, on a cold winter morning ahead of a meeting with the city’s police chief, George Cheng explained how he dropped out of the Massachusetts Institute of Technology with a handful of venture capital cash from Silicon Valley and a dream to bring AI to more police departments.

Cheng, a co-founder of the nascent company Code Four, designed an artificial intelligence system for body cameras that goes beyond Draft One’s capabilities, using both video and audio from the cameras to create police reports.

“We’re seeing a ton of hallucinations from a ton of departments using Draft One,” Cheng said, pointing to a report late last year that erroneously claimed an officer in Heber City, Utah, turned into a frog; the Axon camera audio captured a children’s movie playing in the background.

“Our AI understands that like, ‘Hey, this background noise isn’t necessarily relevant,’” Cheng said of his company’s technology.

Cheng sees a future where police records and tools consolidate into “one or two softwares” that write reports, handle paperwork and communicate across the criminal justice system. Code Four wants to be one of those softwares. He believes his company has an advantage in the next wave of AI in policing: Code Four has worked on video intelligence tools for Palantir, the massive data analytics company most recently in the news for its surveillance work with the U.S. government.

Axon also has broader ambitions. Its recent marketing materials tout how the company’s body cameras will soon have livestreaming options and the ability to connect law enforcement to cameras in retail and health care settings. Some Northwest police departments, such as Medford, are ready to handle more police work with AI, too. They’d like to see officers using Axon’s live language translation services next, and then have officers receive advice on legal precedent from the AI inside their body cameras.

Musa, the Fourth Amendment Center director, wants more consideration before police departments buy into an AI-powered future promised by technology companies trying to make money.

The very companies pushing this technology have made big promises before, she said, pointing to how body cameras became ubiquitous among police departments on a promise they would reduce the number of fatal interactions between officers and the public. Those deaths have only increased since Axon started cornering the body camera market in 2017.[13]

“When you have a systemic problem,” Musa said, “you’re not going to solve it by putting technology on it.”

Footnotes

[1] The review of Pratt’s performance is particularly scathing on RogerEbert.com. “Pratt is immobilized, and his emoting isn’t always entirely convincing. You can see him…trying.”

[2] Between 2006 and 2020, at least fifteen people died after being tased by police around the state of Oregon. In most cases, causes of death were ascribed to drug use or mental illness, not tasing. In 2006, Ashland Police tased 24-year-old Southern Oregon University student Nicholas Hansen, who died afterward. In 2010, Cornelius Police tased 24-year-old Daniel Barga, who had ingested hallucinogenic mushrooms before he was tased. Also in 2010, Clackamas County sheriffs tased 87-year-old Phyllis Owens, of Boring; her pacemaker stopped. In 2019, Albany, Oregon, police tased James Plymell, whose car had broken down on the side of the road. He died at the scene.

[3] Axons are a biological part of the human nervous system that conduct electrical signals essential to life.

[4] Multnomah, Clackamas and Clark counties in the Portland metro area all said they are also not using AI-assisted report writing at this time.

[5] The Western Edge also surveyed many cities in Idaho, Oregon and Washington to determine if they had policies in place that regulate the use of AI by city employees. The policies varied wildly. One stretch of Interstate 90 showed that variety: Spokane allows AI use with guardrails, Spokane Valley bans employees from using it at all, and Coeur d’Alene had no policy whatsoever around AI. Medford was the only city surveyed that provided a separate policy for its police department. Most cities had policies that required human verification of AI outputs and banned putting people’s personal information into AI at all.

[6] Axon does not require police agencies to have any of these safeguards in place when using Draft One, according to its documentation.

[7] Ring is owned by Seattle-based Amazon. A Super Bowl commercial this year ahead of the Seahawks’ national championship promoted the Ring network as a surveillance dragnet that could help find lost puppies. It was met with near universal derision.

[8] Numerous studies have shown large language models like ChatGPT exhibit racial bias in health care, the legal profession, job hiring and many other fields, despite efforts by companies like OpenAI, Google and Microsoft to limit these outputs.

[9] A full list of possible charges under that pilot included:

(1) Felony and Misdemeanor Driving Under the Influence of Intoxicants (DUII) (including non-injury crashes)

(2) Felony and Misdemeanor Driving While Suspended (DWS)

(3) Misdemeanor Failure to Perform the Duties of a Driver (Hit and Run-Property)

(4) Reckless Driving

(5) Felony and Misdemeanor Criminal Mischief (no DV or Family Violence)

(6) Felony and Misdemeanor Retail Theft (non-employment theft from a business)

(7) Felony and Misdemeanor Failure to Report as a Sex Offender

(8) Giving False Information to a Police Officer (criminal and traffic)

(9) Misdemeanor controlled substance crimes (PCS)

(10) Throwing Lighted Material and Offensive Littering

(11) Warrant Arrests

[10] The latest list of charges allowable under Draft One use:

(1) All misdemeanor crimes

(2) Felony Driving Under the Influence of Intoxicants (DUII)

(3) Felony Driving While Suspended (DWS)

(4) Criminal Mischief in the First Degree

(5) Felony Retail Theft (non-employment theft from a business)

(6) Felony Failure to Report as a Sex Offender

(7) Warrant Arrests

[11] Axon has made billions of dollars on a broad range of products since 2017, when it started offering try now, pay later programs on its body cameras.

[12] Some studies of AI-assisted police reports have found no significant time savings for officers, though officers do perceive a lighter workload. Officers and prosecutors who spoke to The Western Edge said they believed Draft One saved significant time on the job.
